First, the AI-generated TLDR

This document discusses the potential impact of new AI models, such as GPT4, on AI-driven products. The author argues that companies need to be thoughtful about investing time in building optimization tricks, as the technology moat they build may be worth nothing in a few months. The author also suggests that AI augmentation is a feature, not a product, and that the way to beat incumbents is to build a radically different user interface to solve user needs.

Your product is an API that produces high-quality, high-resolution AI-generated images. The API is used in all different kinds of products: a comic book creator, an e-commerce product image editor, a headshot creator, etc.

You use Stable Diffusion under the hood. But Stable Diffusion has many problems. It often produces images that have deformed faces or hands, sometimes the images are missing the subject, sometimes it's not the requested style, etc. But you solve these problems with a bunch of clever tricks. You run really fast servers that produce tons of images, then you toss out the bad ones using some heuristics, improve the good ones with edits and filters and then return just the best images. This is a very useful service.
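To make the "generate lots, toss the bad ones, return the best" idea concrete, here is a minimal sketch. Everything in it is hypothetical: the `Candidate` fields, the three heuristic scores, the `threshold` and the `generate` callback stand in for whatever model call and quality checks such a service would actually use, and the "improve the good ones with edits and filters" step is left out.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    """One generated image plus hypothetical heuristic scores in [0, 1]."""
    image_id: str
    face_score: float     # assumed: 1.0 means no visible face/hand deformation
    subject_score: float  # assumed: 1.0 means the requested subject is present
    style_score: float    # assumed: 1.0 means the requested style was matched


def quality(c: Candidate) -> float:
    # The weakest heuristic decides: one bad hand ruins an otherwise good image.
    return min(c.face_score, c.subject_score, c.style_score)


def best_of_n(
    generate: Callable[[str], Candidate],
    prompt: str,
    n: int = 16,
    keep: int = 2,
    threshold: float = 0.6,
) -> List[Candidate]:
    """Generate n candidates, drop those below threshold, return the top `keep`."""
    candidates = [generate(prompt) for _ in range(n)]
    survivors = [c for c in candidates if quality(c) >= threshold]
    survivors.sort(key=quality, reverse=True)
    return survivors[:keep]
```

If a new base model makes nearly every candidate pass the filter on the first try, `n` can drop to 1 and this whole layer stops adding value, which is the risk the rest of this post is about.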

If I didn't use your service, I'm not sure how I could offer a reliable headshot creator to my customers without investing tons of time building all of this myself.

But one day, Stable Diffusion 3.0 comes out and, out of the box, just produces amazing images. No more deformed faces. No more missing subjects. Now, you could get rid of all the clever tricks you've built and just offer a raw API to Stable Diffusion 3.0. Maybe your users wouldn't care and would still be happy to pay you. Or maybe they would realize it is easy enough to spin up Stable Diffusion 3.0 on AWS themselves (half a day of work with GPT5), improving their margins and getting more flexibility than your API can provide. I can't predict what exactly will happen, but it's a risky position to be in as a company.

This scenario highlights the core question for many AI-driven products: If a better model comes along, will people continue to use your product?

GPT4

GPT4 came out last week. The “deformed hands” analogue for transformers is the small context window. When I built autocommit, a tool that generates commit messages based on your current Git diffs, I wrote:

“A few of the diffs also exceeded the 4000 token context window of davinci-003. I’m simply truncating until the prompt is under that limit. We could definitely do something smarter here.”
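For illustration, the naive truncation in that quote could look roughly like the sketch below. This is not the autocommit code. The four-characters-per-token estimate is a crude assumption standing in for a real tokenizer, and `estimate_tokens`, `truncate_to_budget` and `reserve_for_reply` are hypothetical names.

```python
def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token. A real tool would use
    # the model's own tokenizer instead of this estimate.
    return (len(text) + 3) // 4


def truncate_to_budget(
    instructions: str,
    diff: str,
    max_tokens: int = 4000,
    reserve_for_reply: int = 256,
) -> str:
    """Keep whole diff lines from the top until the estimated budget is used up."""
    budget = max_tokens - reserve_for_reply - estimate_tokens(instructions)
    if budget <= 0:
        raise ValueError("instructions alone exceed the context window")

    kept = []
    used = 0
    for line in diff.splitlines(keepends=True):
        cost = estimate_tokens(line)
        if used + cost > budget:
            break  # everything after this point is silently dropped
        kept.append(line)
        used += cost
    return instructions + "".join(kept)
```

A "smarter" version might summarize each file separately or prioritize certain hunks, which is exactly the kind of work a larger context window makes unnecessary.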

Well, I’m sure glad I didn’t waste any time getting around the 4k context window. A lot of people (like Langchain) have spent a lot of time and effort trying to get around the small context windows. But then along comes GPT4 with a 32k (!) context window.

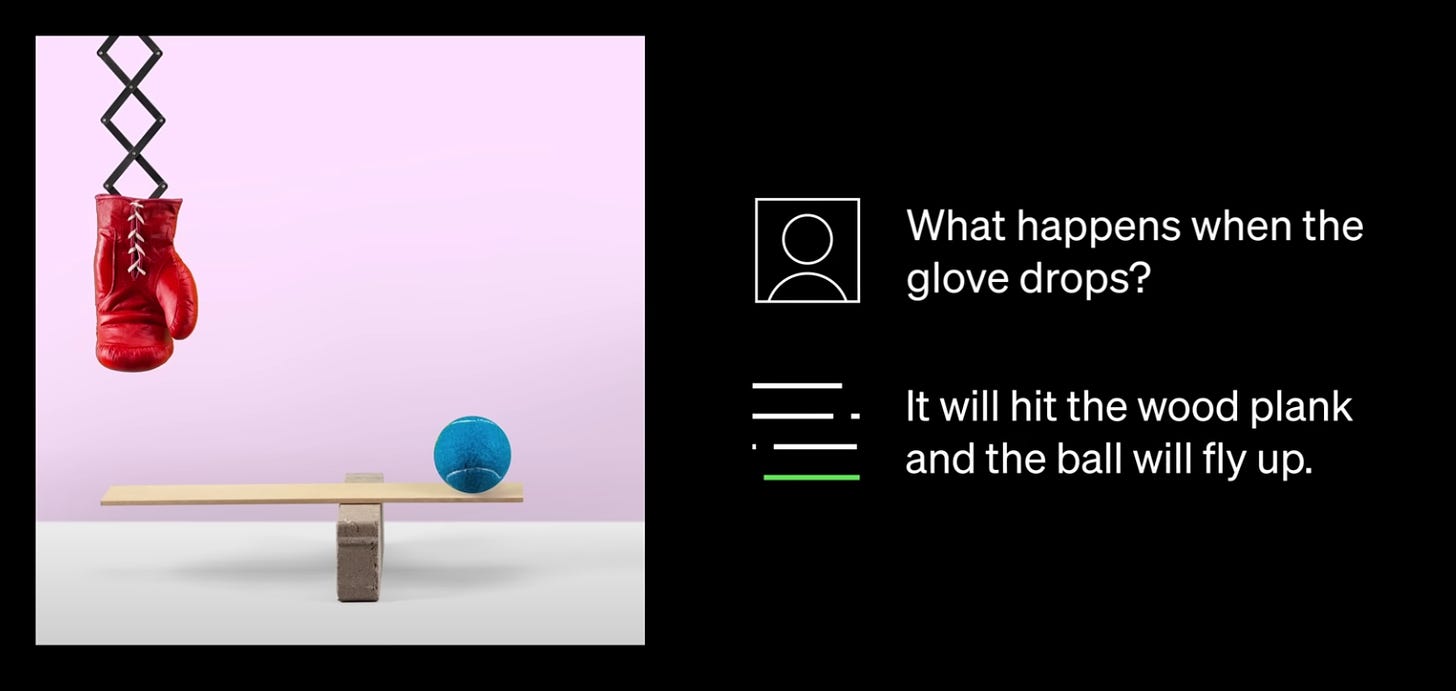

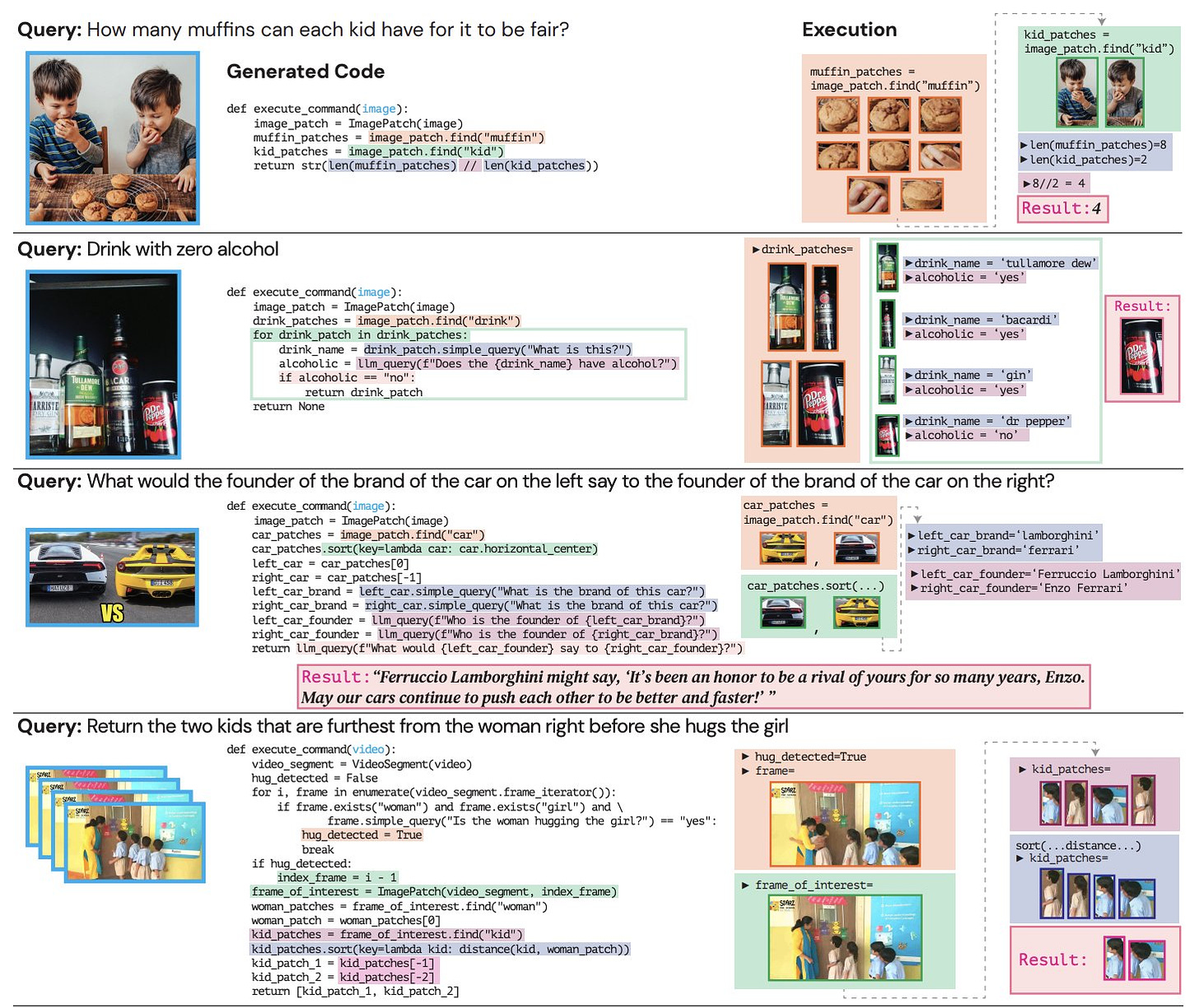

GPT4 is also multimodal so you can upload an image, and ask it questions about the image.

Funnily enough, a project with a similar goal called ViperGPT was released this week as well. It recursively prompts GPT3 to generate and run Python code that answers questions about images. Super cool research for sure, but already obsoleted by GPT4.

Late last year, I was building an app called Beacon, a Mac app sorta like Spotlight or Recast to allow you to use ChatGPT in any Mac app.

I spent a lot of time working with macOS accessibility APIs to read your current selection and screen. However, with GPT4's ability to read and understand screenshots (and screen recordings), achieving the same thing is much simpler.

I could go on about things that have been obsoleted by GPT4 but I’ll stop with just one more: data connectors supported by GPT index. Why deal with APIs and all that if you could just use screenshots?

To sum up, if you need to build a product immediately and must work around edge cases and optimize accordingly, go ahead. But with the pace at which base models are improving, it's essential to be thoughtful about where you invest your time. The technology moat you build here might be worth zero in a few months.

What is a product worth building?

In the scenario at the start of this post, one of the customers of the API product is a comic book creator app (Company A). Will they be negatively affected by a better model? I don't know much about comic book creator apps. But let's make a rather plausible assumption for the purpose of this argument: that there just weren't very many comic book creator apps prior to the advent of AI image generation, because of how cumbersome it would have been to draw each page of a book.

So, this is a product space with no incumbent. And Company A built an app that lets people build comic books easily - something that was never before possible.

Now with Stable Diffusion 3.0 being released, they can delight their users further with higher quality images, and it’s a simple switch from the API. In many ways, it’s better than using the API we described earlier. For one, it’s faster without the extra API call.

The users don’t know or care about SD 3.0, they just love that the app lets them create amazing comic books.

AI augmentation is a feature, not a product

And then, there are products like Lex, a text editor where you can use AI to create and edit content. I don’t mean to pick on them but they are a great case in point here. At first, Lex was an amazing experience because it allowed you to write articles with AI. It didn’t have all the features of Notion or Word, but you’d put up with that because it was just so damn helpful with writing and producing content. But all it is is a thin wrapper around the base model (GPT3). The features they’ve built are easy to copy, and the core value prop of the product comes from the base model.

As of last week, Google Docs, Microsoft Word and Notion have all integrated AI features into their products. The incumbents can integrate AI faster than Lex can grow a user base or build a full-fledged text editor. The incumbents can easily win if all you are doing is augmenting an existing product with AI.

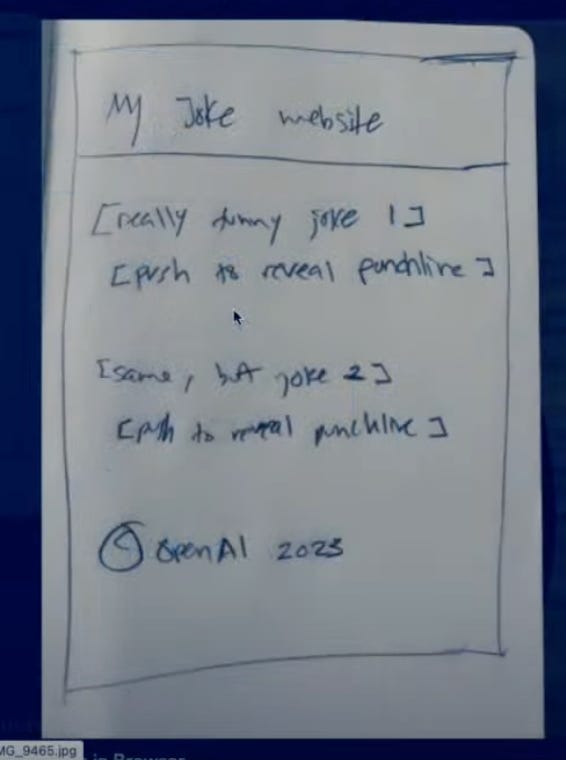

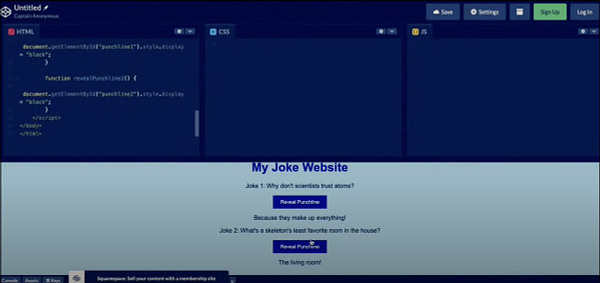

Another example is Genius, which is a copilot for Figma. It’s a really cool product that solves an immediate need for designers and improves their productivity. But what if the future is a world where we don’t need to design mockups? Instead, we just go straight to working code. Draw a mock-up on a napkin and get HTML code.

However, I do believe there is a way to beat the incumbents. I discussed this in an older post titled "AI in every app or AI-native apps?". What if you create a completely different user interface to solve the same user needs as current products? For example, Descript is a video editor where you edit videos by editing words in the video, similar to editing a document. This can be 10 times faster than dealing with a timeline editor for many use cases.

The reason why Adobe Premiere or iMovie cannot build this different user interface is that their current customers rely on the existing UI. They cannot upset them by making a radical shift, especially if the new UI does not work for all the various use cases that people rely on iMovie for. They could add a new tab in their product or release an entirely new product, but at that point, the playing field is more level. The incumbency advantage of having an existing user base is greatly diminished in that scenario.